Restricted Boltzmann machine parameters for a product distribution

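2018-11-21

A restricted Boltzmann machine (RBM) is a tool for modelling discrete probability distributions. Specifically, it's a collection of numbers (weights $W$ and biases $\mathbf{a}$, $\mathbf{b}$), along with algorithms for training (setting the weights and biases to reflect the desired distribution) and sampling (obtaining samples that are distributed according to the desired distribution).

The joint probability distribution for an RBM is

$$
p(\mathbf{v}, \mathbf{h}) = \frac{1}{Z} \exp\left( \mathbf{a} \cdot \mathbf{v} + \mathbf{b} \cdot \mathbf{h} + \mathbf{v}^T W \mathbf{h} \right),
$$

where $Z$ is the normalization constant, $\mathbf{a}$ holds the visible biases, and $\mathbf{b}$ holds the hidden biases. Here, the vector $\mathbf{v}$ (resp. $\mathbf{h}$) represents the visible (resp. hidden) units, and each element $v_i$ (resp. $h_j$) can take on the values $0$ and $1$.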

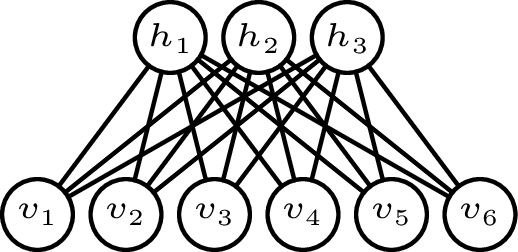

Pictorially, this looks like the following:

In this example, there are visible units, hidden units, and weights connecting them.

In this example, there are visible units, hidden units, and weights connecting them.

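The hidden units are an artifact of the model; the actual distribution being modelled is only for the visible units $\mathbf{v}$. The effective distribution that we obtain from the RBM is the marginal distribution

$$
p(\mathbf{v}) = \sum_{\mathbf{h}} p(\mathbf{v}, \mathbf{h}),
$$

where the sum over $\mathbf{h}$ is understood to run over all states of the hidden layer. The question that I have in mind is the following: What are the appropriate weights and biases so that $p(\mathbf{v})$ is a product of univariate distributions over $v_i$?

We can express the marginal distribution as

$$
p(\mathbf{v}) = \frac{1}{Z} \prod_i e^{a_i v_i} \prod_j \left( 1 + e^{b_j + \sum_i v_i W_{ij}} \right),
$$

with visible biases $a_i$, hidden biases $b_j$, weights $W_{ij}$, and normalization constant $Z$, because each binary hidden unit $h_j \in \{0, 1\}$ can be summed over independently.

The $v_i$ are in general coupled via the weights, so we will make a simplifying assumption: we require that the weight matrix $W$ have no more than one non-zero entry in each row and each column.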
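The closed form of the marginal can be checked numerically on a tiny RBM. The following is a minimal sketch, assuming the common convention $p(\mathbf{v}, \mathbf{h}) \propto \exp(\mathbf{a} \cdot \mathbf{v} + \mathbf{b} \cdot \mathbf{h} + \mathbf{v}^T W \mathbf{h})$ with units in $\{0, 1\}$; the function names and the parameter values are made up for illustration.

```python
import itertools
import math


def joint_weight(v, h, a, b, W):
    """Unnormalized joint probability exp(a.v + b.h + v^T W h)."""
    exponent = sum(a_i * v_i for a_i, v_i in zip(a, v))
    exponent += sum(b_j * h_j for b_j, h_j in zip(b, h))
    exponent += sum(
        v[i] * W[i][j] * h[j] for i in range(len(v)) for j in range(len(h))
    )
    return math.exp(exponent)


def marginal_brute_force(a, b, W):
    """p(v) obtained by explicitly summing the joint over all hidden states."""
    states_v = list(itertools.product((0, 1), repeat=len(a)))
    states_h = list(itertools.product((0, 1), repeat=len(b)))
    unnorm = {
        v: sum(joint_weight(v, h, a, b, W) for h in states_h) for v in states_v
    }
    Z = sum(unnorm.values())
    return {v: x / Z for v, x in unnorm.items()}


def marginal_closed_form(a, b, W):
    """p(v) from the product over hidden units, with no sum over h."""
    n_v, n_h = len(a), len(b)
    unnorm = {}
    for v in itertools.product((0, 1), repeat=n_v):
        value = math.exp(sum(a_i * v_i for a_i, v_i in zip(a, v)))
        for j in range(n_h):
            value *= 1.0 + math.exp(b[j] + sum(v[i] * W[i][j] for i in range(n_v)))
        unnorm[v] = value
    Z = sum(unnorm.values())
    return {v: x / Z for v, x in unnorm.items()}


# Arbitrary illustrative parameters: 3 visible units, 2 hidden units.
a = [0.2, -0.5, 1.0]
b = [0.3, -0.7]
W = [[0.5, -1.0], [1.5, 0.25], [-0.75, 2.0]]

p_brute = marginal_brute_force(a, b, W)
p_closed = marginal_closed_form(a, b, W)
max_diff = max(abs(p_brute[v] - p_closed[v]) for v in p_brute)
print(max_diff)  # expected to be at the level of floating-point round-off
```

The brute-force version scales exponentially in the number of hidden units, which is why it is only useful as a sanity check on small examples.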

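Without loss of generality, we can place these entries on the diagonal and call them $w_i$, since that only amounts to rearranging the visible and hidden units.

Let $n_v$ and $n_h$ denote the numbers of visible and hidden units, so that $W$ is an $n_v \times n_h$ matrix. If $n_v = n_h$, the weight matrix then has the form

$$
W = \begin{pmatrix} w_1 & & \\ & \ddots & \\ & & w_{n_v} \end{pmatrix};
$$

if $n_v > n_h$, it has the form

$$
W = \begin{pmatrix} w_1 & & \\ & \ddots & \\ & & w_{n_h} \\ 0 & \cdots & 0 \\ \vdots & & \vdots \\ 0 & \cdots & 0 \end{pmatrix};
$$

and if $n_v < n_h$, it has the form

$$
W = \begin{pmatrix} w_1 & & & 0 & \cdots & 0 \\ & \ddots & & \vdots & & \vdots \\ & & w_{n_v} & 0 & \cdots & 0 \end{pmatrix}.
$$

Thus, under this assumption only the first $\min(n_v, n_h)$ visible and hidden units are allowed to be connected, and only pairwise.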
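The rearrangement argument can be made concrete. The sketch below assumes the weight matrix is stored as a list of rows, with rows indexing visible units and columns indexing hidden units; the function name `diagonalize_by_permutation` is hypothetical. It reorders the units so that all non-zero weights land on the leading diagonal.

```python
def diagonalize_by_permutation(W):
    """Reorder rows (visible units) and columns (hidden units) so that every
    non-zero weight sits on the main diagonal.

    Assumes W has at most one non-zero entry per row and per column, and
    raises ValueError otherwise.
    """
    n_v, n_h = len(W), len(W[0])
    pairs = [(i, j) for i in range(n_v) for j in range(n_h) if W[i][j] != 0]
    rows = [i for i, _ in pairs]
    cols = [j for _, j in pairs]
    if len(set(rows)) != len(rows) or len(set(cols)) != len(cols):
        raise ValueError("more than one non-zero entry in a row or column")

    # Connected units come first, in matching order; unconnected units follow.
    row_order = rows + [i for i in range(n_v) if i not in rows]
    col_order = cols + [j for j in range(n_h) if j not in cols]
    permuted = [[W[i][j] for j in col_order] for i in row_order]
    return permuted, row_order, col_order


# Illustrative 3 x 4 weight matrix with two connected pairs.
W = [
    [0.0, 0.0, 1.5, 0.0],
    [0.0, 0.0, 0.0, 0.0],
    [-2.0, 0.0, 0.0, 0.0],
]
W_diag, row_order, col_order = diagonalize_by_permutation(W)
for row in W_diag:
    print(row)
```

Since the same permutations can be applied to the bias vectors, the relabelled model describes the same distribution up to the ordering of the units.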

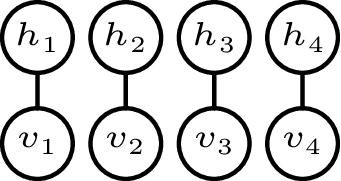

For brevity, we will assume in the following that , which looks like

This detangled layout already suggests to us that the visible units should act independently of one another.

This detangled layout already suggests to us that the visible units should act independently of one another.

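That this is indeed the case is very easy to show rigorously. With a square and diagonal weight matrix, $W_{ij} = w_i \delta_{ij}$, the marginal distribution simplifies to

$$
p(\mathbf{v}) = \frac{1}{Z} \prod_i e^{a_i v_i} \left( 1 + e^{b_i + w_i v_i} \right),
$$

which is the product of univariate distributions, as desired:

$$
p(\mathbf{v}) = \prod_i p_i(v_i), \qquad p_i(v_i) = \frac{e^{a_i v_i} \left( 1 + e^{b_i + w_i v_i} \right)}{1 + e^{b_i} + e^{a_i} \left( 1 + e^{b_i + w_i} \right)}.
$$

Thus, there is a straightforward answer to the question: It's sufficient (but possibly not strictly necessary) to have no off-diagonal weights.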
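As a numerical illustration of the factorization, the sketch below compares the brute-force marginal of a small RBM with diagonal weights against the product of per-unit distributions. It assumes units in $\{0, 1\}$ and the convention $p(\mathbf{v}, \mathbf{h}) \propto \exp(\sum_i a_i v_i + b_i h_i + w_i v_i h_i)$; the helper names and parameter values are made up.

```python
import itertools
import math


def marginal(a, b, w):
    """Marginal p(v) for diagonal weights, by brute-force sum over hidden states."""
    n = len(a)
    states = list(itertools.product((0, 1), repeat=n))
    unnorm = {}
    for v in states:
        total = 0.0
        for h in states:
            exponent = sum(
                a[i] * v[i] + b[i] * h[i] + w[i] * v[i] * h[i] for i in range(n)
            )
            total += math.exp(exponent)
        unnorm[v] = total
    Z = sum(unnorm.values())
    return {v: x / Z for v, x in unnorm.items()}


def univariate(a_i, b_i, w_i):
    """Return (p_i(0), p_i(1)) for a single visible unit and its hidden partner."""
    weight0 = 1.0 + math.exp(b_i)
    weight1 = math.exp(a_i) * (1.0 + math.exp(b_i + w_i))
    norm = weight0 + weight1
    return (weight0 / norm, weight1 / norm)


# Arbitrary illustrative parameters for n = 3 visible-hidden pairs.
a = [0.4, -1.2, 0.0]
b = [-0.3, 0.8, 2.0]
w = [1.5, -0.5, 0.7]

p = marginal(a, b, w)
factors = [univariate(*params) for params in zip(a, b, w)]
max_diff = max(
    abs(p[v] - math.prod(factors[i][v[i]] for i in range(len(v)))) for v in p
)
print(max_diff)  # expected to be at the level of floating-point round-off
```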

Because a univariate distribution over $0$ and $1$ is a Bernoulli distribution, we have decomposed the marginal distribution into a product of Bernoulli distributions, each specified by a single parameter

$$
p_i(1) = \frac{e^{a_i} \left( 1 + e^{b_i + w_i} \right)}{1 + e^{b_i} + e^{a_i} \left( 1 + e^{b_i + w_i} \right)}.
$$

Hence, we see that we have three knobs to turn independently for each visible unit $v_i$: the visible bias $a_i$, the hidden bias $b_i$, and the weight $w_i$.

These results make intuitive sense:

- To guarantee an outcome, we can bias the visible unit strongly in the appropriate direction: $p_i(1) \to 1$ as $a_i \to +\infty$, and $p_i(1) \to 0$ as $a_i \to -\infty$.

- A very large negative bias on a hidden unit renders that unit irrelevant, so its weight ceases to matter: as $b_i \to -\infty$, $p_i(1) \to 1 / (1 + e^{-a_i})$.

- A very large weight between a visible unit and the corresponding hidden unit increases the likelihood of a $1$, even if that hidden unit is negatively biased: $p_i(1) \to 1$ as $w_i \to +\infty$.

- A weight of zero makes the hidden unit useless, and its bias becomes immaterial. In this case, we can tune $a_i$ to get a specific probability; for example $p_i(1) = 1/2$ (a fair coin flip) can be achieved with $a_i = 0$.

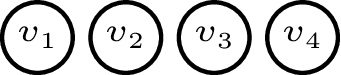

The last of these observations corresponds to the trivial layout

where we have removed the hidden units entirely.

where we have removed the hidden units entirely.
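The limiting cases discussed above can be checked numerically. This is a minimal sketch, assuming units in $\{0, 1\}$ and a single visible unit with visible bias `a_i`, hidden bias `b_i`, and weight `w_i`, so that the probability of a $1$ is $e^{a_i}(1 + e^{b_i + w_i})$ divided by $1 + e^{b_i} + e^{a_i}(1 + e^{b_i + w_i})$. The function name `p_one` and the specific parameter values are hypothetical.

```python
import math


def p_one(a_i, b_i, w_i):
    """Probability that the visible unit is 1, after summing out its hidden partner."""
    weight0 = 1.0 + math.exp(b_i)
    weight1 = math.exp(a_i) * (1.0 + math.exp(b_i + w_i))
    return weight1 / (weight0 + weight1)


def sigmoid(x):
    """Logistic function, the Bernoulli parameter of a lone biased unit."""
    return 1.0 / (1.0 + math.exp(-x))


# Strong visible bias: the outcome is essentially guaranteed.
strong_positive_bias = p_one(30.0, 0.5, -1.0)
strong_negative_bias = p_one(-30.0, 0.5, -1.0)

# Very negative hidden bias: the weight stops mattering and only a_i is left.
dead_hidden_unit = p_one(0.3, -40.0, 5.0)

# Very large weight: a 1 becomes likely even with negative biases.
large_weight = p_one(-1.0, -2.0, 30.0)

# Zero weight: the hidden bias is immaterial, and a_i = 0 gives a fair coin.
fair_coin = p_one(0.0, 1.7, 0.0)

print(strong_positive_bias, strong_negative_bias)
print(dead_hidden_unit, sigmoid(0.3))
print(large_weight)
print(fair_coin)
```

The finite values 30 and 40 stand in for the infinite limits; they are large enough for the limits to be visible but small enough to avoid overflow in `math.exp`.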